Why Dwarf Fortress

People say Dwarf Fortress is the most complex game ever made. That’s not why I picked it.

I picked it because it’s abstract.

NetHack and DF are structured like Go: a small ruleset that produces near-infinite emergent complexity. Go is a 19×19 board with simple capture rules. DF is ASCII characters on a terminal plus a few dozen DFHack commands, yet forgetting to wall off an underground river can end your fortress in minutes.

That structure — simple rules, enormous state space — is actually ideal for AI:

- State is structured, not pixels. Text output and data files. An LLM can read it directly without a vision model

- Action space is discrete. A defined set of commands (prospect, quickfort run, dig-now, showmood…)

- Feedback is unambiguous. Dwarves are dead or alive. Food count is a number. Whether a room was dug is a filesystem check

- No memorizable optimal strategy. Every world is procedurally generated. The agent has to actually understand the situation

Modern 3D games are paradoxically harder to build agents for — you deal with pixels, frame rates, occlusion. DF’s ASCII interface is an accidental advantage.

The Architecture

Core idea: never touch the game UI. Talk to DFHack only.

Four layers:

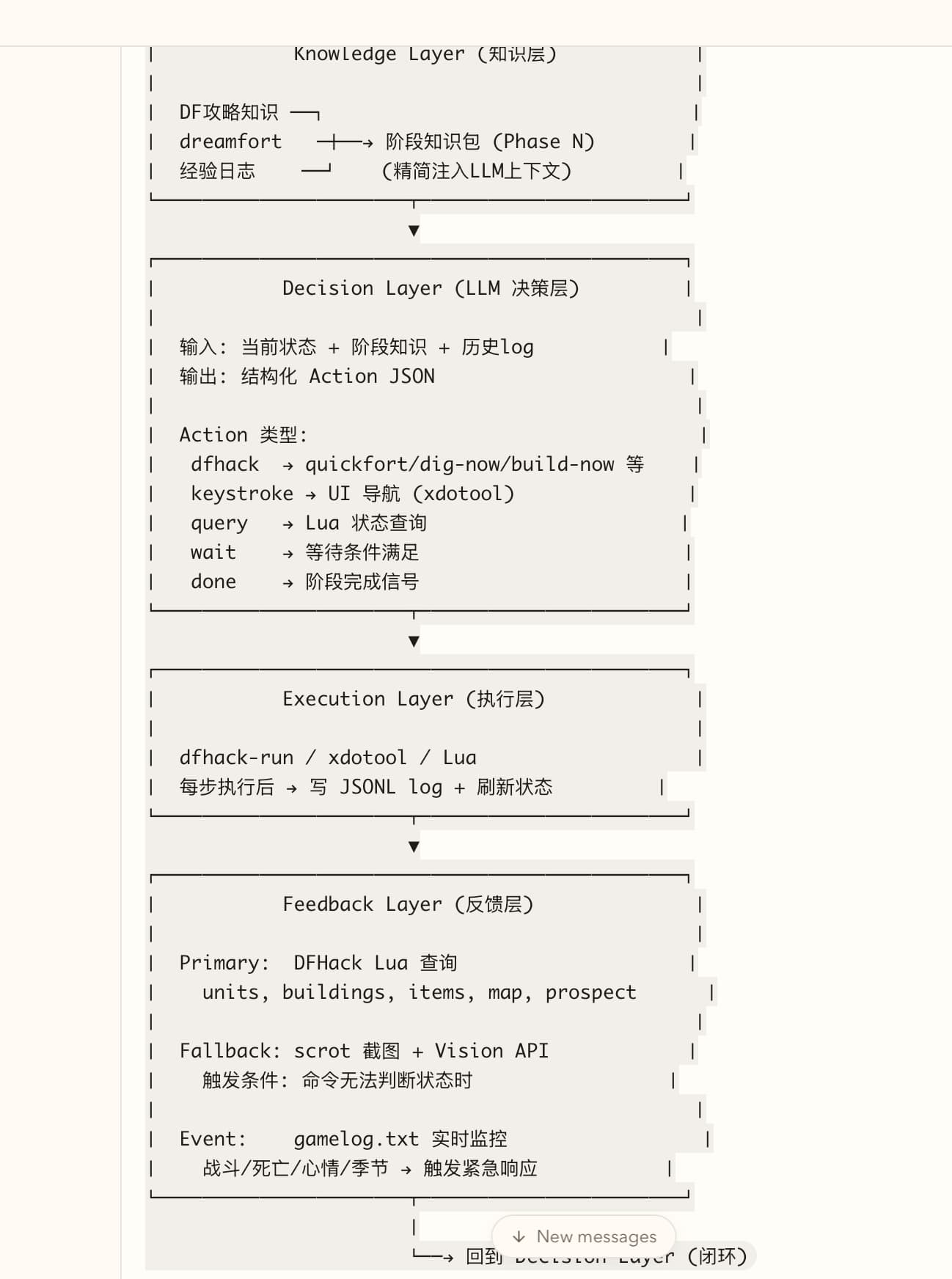

Knowledge Layer — DF strategy knowledge + dreamfort blueprint library + experience logs, distilled into phase-specific context injected into the LLM prompt.

Decision Layer (LLM) — receives current state + phase knowledge + action history, outputs a structured Action JSON. Action types: dfhack (quickfort/dig-now/build-now), keystroke (UI navigation), query (Lua state probe), wait, done.
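A minimal sketch of validating the Action JSON before anything reaches the game. The five action types come from the list above; the `type` key, the `payload` field, and the `parse_action` name are assumptions for illustration, not the repo's actual schema.

```python
import json
from dataclasses import dataclass, field
from typing import Any

# Action types named in the post. The JSON field names are assumptions.
ACTION_TYPES = {"dfhack", "keystroke", "query", "wait", "done"}


@dataclass
class Action:
    type: str
    payload: dict[str, Any] = field(default_factory=dict)


def parse_action(raw: str) -> Action:
    """Parse one LLM reply into an Action, rejecting unknown action types."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("action must be a JSON object")
    kind = data.get("type")
    if not isinstance(kind, str) or kind not in ACTION_TYPES:
        raise ValueError(f"unknown action type: {kind!r}")
    # Everything except the type tag is passed through untouched.
    payload = {k: v for k, v in data.items() if k != "type"}
    return Action(kind, payload)
```

Rejecting unknown types up front means a malformed reply costs one retry instead of a stray command in the fortress.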

Execution Layer — runs dfhack-run / xdotool / Lua, writes a JSONL log after each step, refreshes state.
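One way the execute-then-log step could look, as a sketch. It assumes `dfhack-run` is on PATH; the record fields (`ts`, `args`, `returncode`, `output`) and the function names are made up for this example.

```python
import json
import subprocess
import time
from pathlib import Path
from typing import Callable, Sequence


def run_dfhack(args: Sequence[str]) -> tuple[int, str]:
    """Run one DFHack command through dfhack-run (assumed to be on PATH)."""
    proc = subprocess.run(
        ["dfhack-run", *args], capture_output=True, text=True, timeout=120
    )
    return proc.returncode, proc.stdout + proc.stderr


def execute_step(
    args: Sequence[str],
    log_path: str,
    runner: Callable[[Sequence[str]], tuple[int, str]] = run_dfhack,
) -> dict:
    """Execute one command and append a JSONL record describing the result."""
    code, output = runner(args)
    record = {
        "ts": time.time(),
        "args": list(args),
        "returncode": code,
        "output": output,
    }
    # One JSON object per line, so the log can be replayed or tailed.
    with Path(log_path).open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
    return record
```

The `runner` parameter lets the loop be exercised without a running game by passing a stub.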

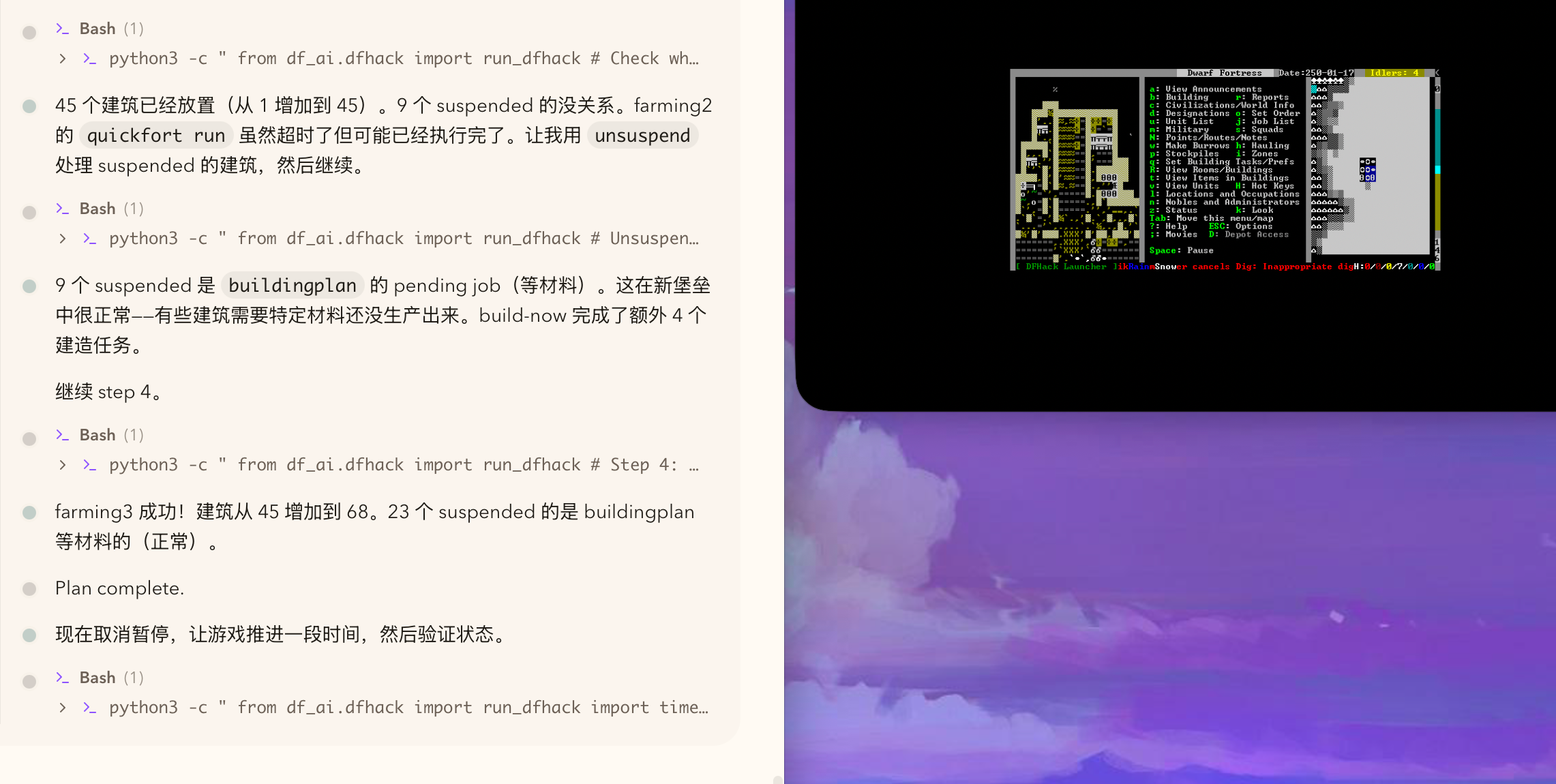

Feedback Layer — primary: DFHack Lua queries (units, buildings, items, map, prospect). Fallback: scrot screenshot + Vision API when commands can’t determine state. Event: gamelog.txt real-time monitoring — combat/death/mood/season changes trigger immediate response.
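A rough sketch of the event channel: classify gamelog lines by keyword and surface only the ones that need a reaction. The patterns below are hypothetical guesses, not verified gamelog wording, and should be checked against a real gamelog.txt. Combat is left out for brevity.

```python
import re
from typing import Iterable, Iterator, Optional

# Hypothetical keyword patterns. Real gamelog phrasing may differ.
EVENT_PATTERNS = {
    "death": re.compile(r"has died|struck down|found dead", re.IGNORECASE),
    "mood": re.compile(r"\bmood\b", re.IGNORECASE),
    "season": re.compile(r"\b(spring|summer|autumn|winter)\b", re.IGNORECASE),
}


def classify(line: str) -> Optional[str]:
    """Return the first matching event kind, or None for routine lines."""
    for kind, pattern in EVENT_PATTERNS.items():
        if pattern.search(line):
            return kind
    return None


def events(lines: Iterable[str]) -> Iterator[tuple[str, str]]:
    """Yield (kind, text) for every line that matches an event pattern."""
    for line in lines:
        kind = classify(line)
        if kind is not None:
            yield kind, line.rstrip("\n")
```

`events` accepts any iterable of lines, so it works the same on an open file handle or on a list of lines collected since the last poll.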

Key implementation decisions:

TEXT mode on VPS. DF's PRINT_MODE:TEXT setting renders the game as an ncurses TUI, so it runs headless in a PTY with no Xvfb.

dfhack-run command pipe, not Lua RPC. The Lua RPC channel is unstable when DF runs headless; the dfhack-run command pipe works reliably.

quickfort with --cursor. Digging a room needs no menu navigation, just quickfort run blueprint.csv --cursor x,y,z. The AI needs coordinates, not UI skills.
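As a sketch, turning coordinates into that command is a small pure function. The helper name is an assumption; the argument list mirrors the quickfort invocation quoted above and could be passed to dfhack-run as-is.

```python
def quickfort_run_args(blueprint: str, x: int, y: int, z: int) -> list[str]:
    """Build the argument list for `quickfort run <blueprint> --cursor x,y,z`."""
    for name, value in (("x", x), ("y", y), ("z", z)):
        if value < 0:
            raise ValueError(f"{name} must be non-negative, got {value}")
    return ["quickfort", "run", blueprint, "--cursor", f"{x},{y},{z}"]
```

Keeping the arguments as a list, rather than one formatted string, avoids shell quoting problems when a blueprint path contains spaces.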

Filesystem as state oracle. Is the fortress running? Count data/save/region*/region_snapshot-*.dat. How many ticks? The filenames encode it. No internal queries needed for basic state.
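A sketch of the snapshot count using the glob pattern above. `df_root` (the DF install directory) and the function name are assumptions. The tick encoding in the filenames is not parsed here, since its exact format is not described.

```python
from pathlib import Path


def snapshot_count(df_root: str) -> int:
    """Count region snapshot files under <df_root>/data/save/region*/."""
    pattern = "data/save/region*/region_snapshot-*.dat"
    return sum(1 for _ in Path(df_root).glob(pattern))
```

A missing save directory simply yields zero, which makes the check safe to poll before the first fortress exists.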

Phase 1 Running

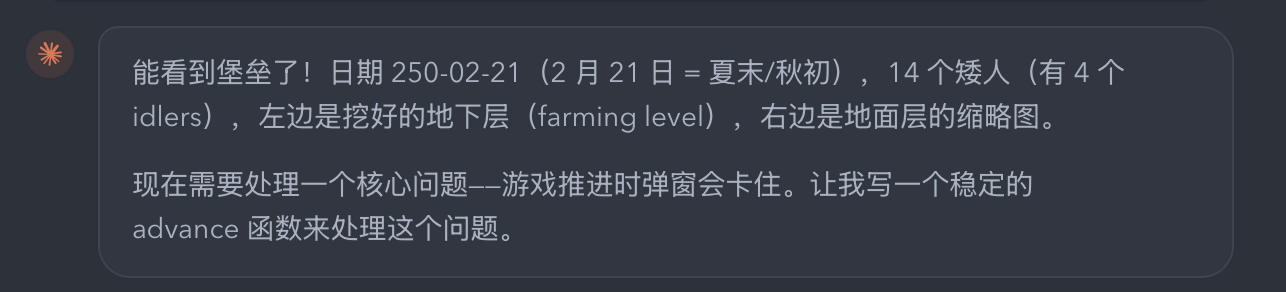

Phase 1 goal: survival basics — food, drink, shelter.

The plan runs dreamfort blueprints in sequence: setup → surface clearing → dig farming level → place workshops → farm plots + dining room → unpause → verify.

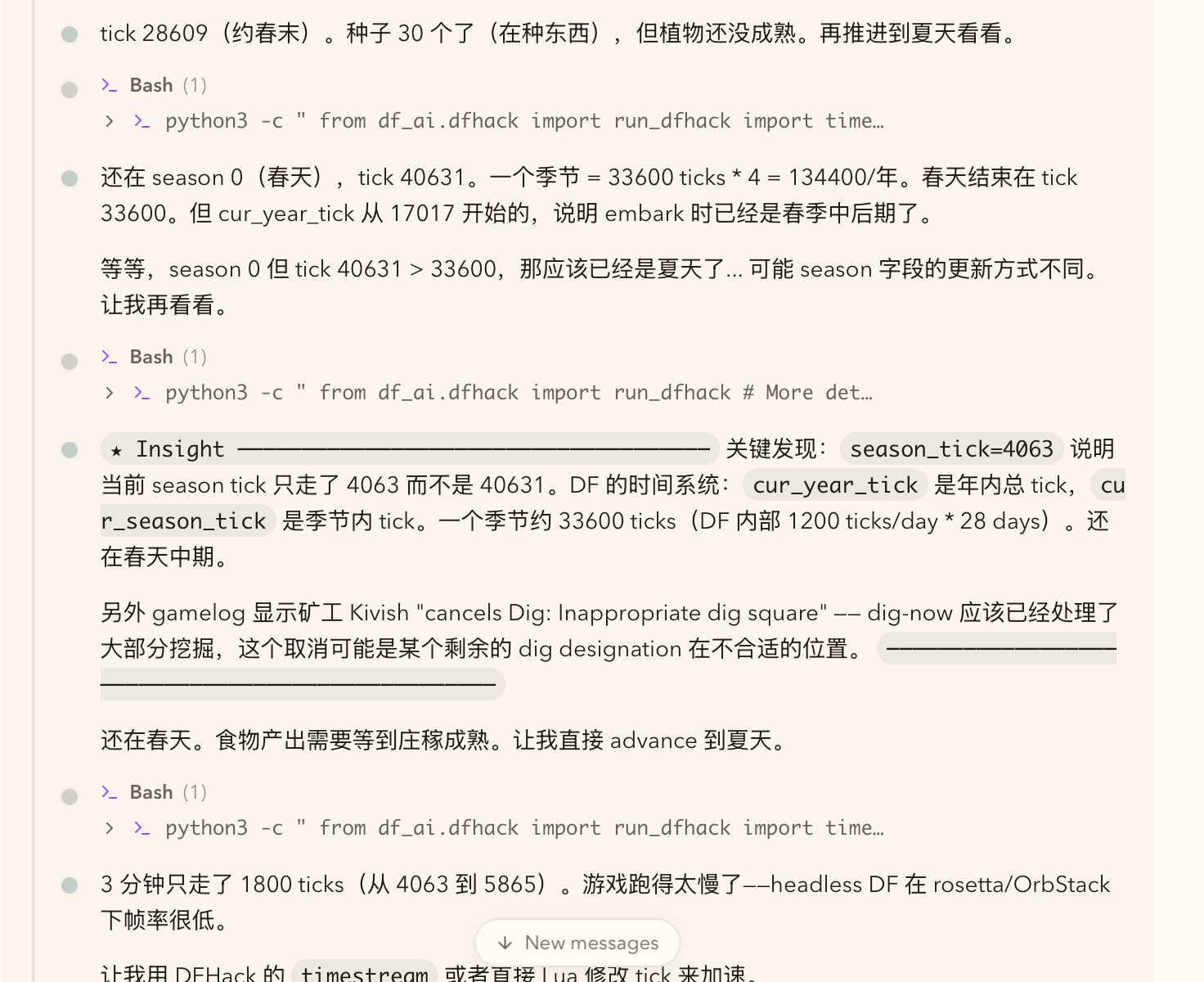

The agent runs, reads state, reasons through it. One interesting debugging moment — tracking DF’s time system:

cur_year_tick is the year-wide tick counter. cur_season_tick is within-season. A month is 33,600 ticks (1,200 ticks/day × 28 days), so a three-month season is 100,800 ticks. The agent figures out it’s still mid-spring and needs to advance to summer for crops to ripen. The game was running slowly on the headless setup, so DFHack’s timestream was used to speed it up.
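The calendar arithmetic can be sketched from cur_year_tick alone, assuming 1,200 ticks per day, 28-day months, three months per season, and a year that starts in spring. The function name and return shape are made up for this example.

```python
TICKS_PER_DAY = 1200
DAYS_PER_MONTH = 28
MONTHS_PER_SEASON = 3
TICKS_PER_MONTH = TICKS_PER_DAY * DAYS_PER_MONTH          # 33,600
TICKS_PER_SEASON = TICKS_PER_MONTH * MONTHS_PER_SEASON    # 100,800
SEASONS = ("spring", "summer", "autumn", "winter")
TICKS_PER_YEAR = TICKS_PER_SEASON * len(SEASONS)          # 403,200


def calendar_position(cur_year_tick: int) -> tuple[str, int, int, int]:
    """Return (season, month 1-12, day 1-28, ticks until the next season)."""
    if not 0 <= cur_year_tick < TICKS_PER_YEAR:
        raise ValueError(f"tick out of range: {cur_year_tick}")
    season_index, tick_in_season = divmod(cur_year_tick, TICKS_PER_SEASON)
    month = cur_year_tick // TICKS_PER_MONTH + 1
    day = (cur_year_tick % TICKS_PER_MONTH) // TICKS_PER_DAY + 1
    return SEASONS[season_index], month, day, TICKS_PER_SEASON - tick_in_season
```

For example, tick 50,400 is the 15th day of the second month: still spring, with 50,400 ticks left before summer.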

Then Phase 1 completes:

Buildings went from 1 → 45 (farming2) → 68 (farming3). 23 suspended buildings are normal — buildingplan waiting on materials not yet produced. Plan complete.

What’s Next

Mid-term: phase-based goals continuing from here.

- Phase 2: production chain (farming → brewing → woodworking → smelting)

- Phase 3: defense (corridors, traps, military)

Each phase has its own goal function and verifiable success condition.
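A sketch of what a phase with a verifiable success condition might look like. The state keys (`food`, `drink`, `workshops`) and the thresholds are hypothetical placeholders, not the project's actual goal functions.

```python
from dataclasses import dataclass
from typing import Callable, Mapping, Optional, Sequence

State = Mapping[str, int]


@dataclass(frozen=True)
class Phase:
    name: str
    is_complete: Callable[[State], bool]


# Hypothetical thresholds, chosen only to make the example concrete.
PHASES = (
    Phase("survival", lambda s: s.get("food", 0) >= 50 and s.get("drink", 0) >= 50),
    Phase("production", lambda s: s.get("workshops", 0) >= 4),
)


def current_phase(phases: Sequence[Phase], state: State) -> Optional[Phase]:
    """Return the first phase whose success condition is not yet met."""
    for phase in phases:
        if not phase.is_complete(state):
            return phase
    return None
```

Because each condition is a plain predicate over queried state, the same check can gate the transition to the next phase and serve as a regression test on saved states.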

Long-term: cross-session memory. Fortress failed → record why → inject context on next load. DF’s gamelog.txt logs every event from the beginning of the fortress. That’s a natural episode memory. The LLM becomes not just a planner for the current situation, but an experience accumulator across runs.
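The record-then-inject loop could be as small as this sketch. The file format, field names, and function names are assumptions. Nothing like this is confirmed to exist in the repo yet.

```python
import json
from pathlib import Path


def record_failure(path: str, cause: str, year: int) -> None:
    """Append one failure record to a JSONL memory file."""
    with Path(path).open("a", encoding="utf-8") as fh:
        fh.write(json.dumps({"cause": cause, "year": year}) + "\n")


def failure_context(path: str, limit: int = 5) -> str:
    """Format the most recent failures as prompt context for the next run."""
    p = Path(path)
    if not p.exists():
        return ""
    entries = [
        json.loads(line)
        for line in p.read_text(encoding="utf-8").splitlines()
        if line.strip()
    ]
    return "\n".join(f"- Year {e['year']}: {e['cause']}" for e in entries[-limit:])
```

Capping the context at the last few failures keeps the prompt short as the number of lost fortresses grows.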

The game is designed around losing. Each failure is signal.

Repo: zerone0x/df-ai-exp